LUGANO CITY

Role-Based Performance Evaluation System

Translating a multi-phase HR evaluation process into a structured, role-aware desktop platform.

Designed within a public administration environment, the system required structured governance, transparent decision flows and clearly defined multi-role process control.

This project focused on transforming complex evaluation logic into a coherent, interaction-driven system supporting employees, managers and HR simultaneously.

Project Scope

Designing the interaction architecture for a multi-role evaluation process including:

• Phase-based workflows

• Weighted objective scoring (100% constraint logic)

• Role-based views (Employee / Manager / HR)

• Status tracking and validation states

• High-fidelity interactive desktop prototyping for stakeholder alignment

Role: UX/UI Designer – End-to-End Responsibility

Scope: Process Architecture · Interaction Design · High-Fidelity Prototyping (Figma)

Project Context

Desktop-First Enterprise UX Environment

• HR-driven internal system

• Multi-role approval logic

• High data density and governance constraints

• Process transparency requirements

Product Challenges

• Multi-phase evaluation process with fixed timelines

• Role-based responsibilities (Employee, Manager, HR)

• Weighted objective logic (100% constraint rule)

• High data density in a desktop environment

• Need for transparency and process clarity

• HR override and validation states

Project Objectives

• Translate complex HR business logic into a structured interaction system

• Reduce cognitive load in multi-step evaluation workflows

• Enable clear role separation while maintaining system coherence

• Provide real-time validation feedback (scoring & percentage logic)

• Create a high-fidelity interactive prototype for stakeholder alignment

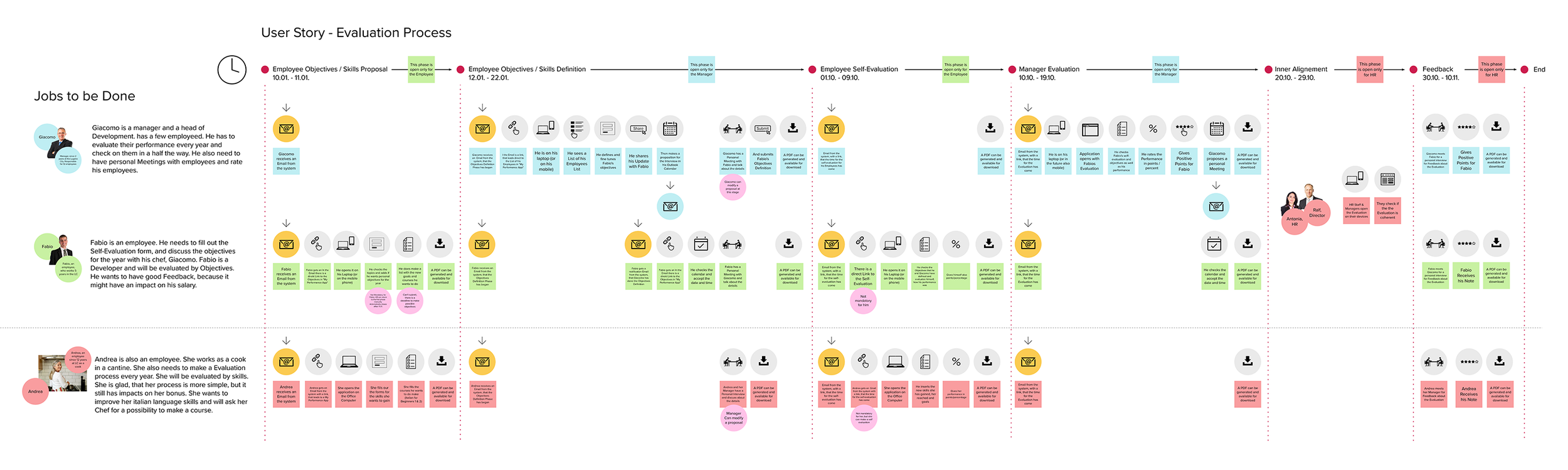

Interaction Architecture

Before designing individual screens, the evaluation process was translated into a structured interaction architecture defining phases, role transitions and validation logic.

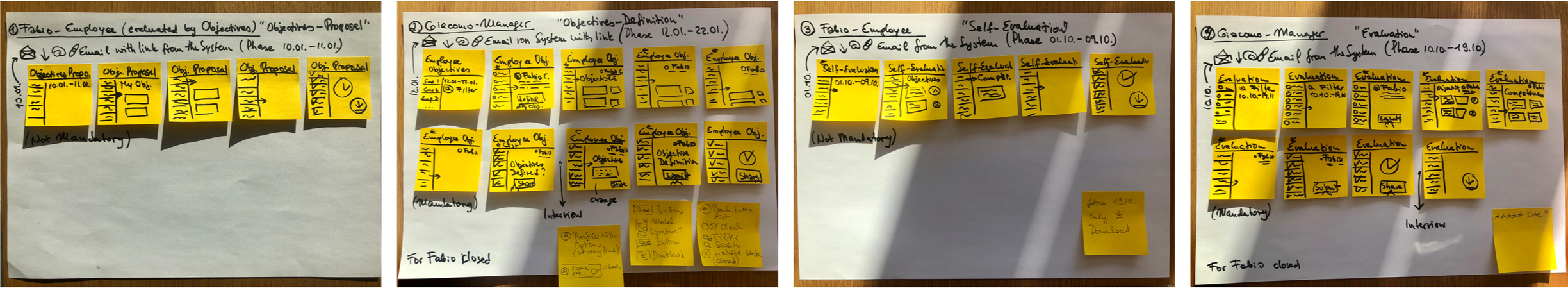

Early Process Exploration

To structure the multi-phase evaluation logic, I first mapped the interaction flows as low-fidelity paper prototypes, then translated them into digital wireframes and interactive desktop prototypes.

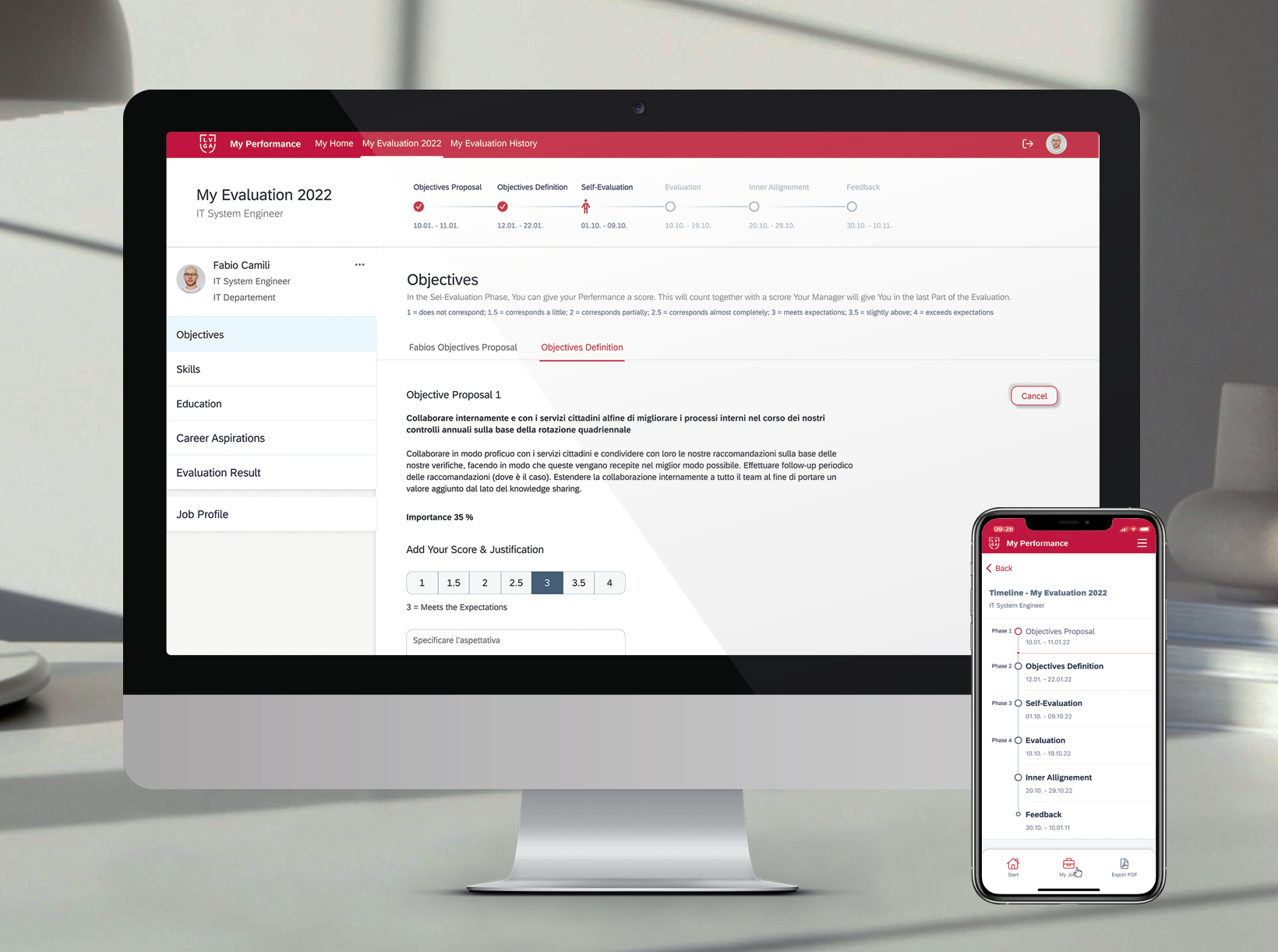

High-Fidelity Prototype

Phase-Based System Architecture

The evaluation process was structured into four core system phases, each governed by role-based permissions, timeline constraints and validation logic. A fully interactive, end-to-end prototype was developed to simulate the complete evaluation lifecycle across roles and system states. The screens shown below represent selected excerpts from a significantly larger clickable evaluation environment.

Phase 1 — Objectives Proposal (Employee)

Employees initiate the evaluation cycle by defining objectives within a fixed percentage allocation model (capped at 100%), subject to deadline control.
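To make the allocation rule concrete, here is a minimal sketch of the kind of check that could drive the real-time percentage feedback. It is an illustration only, not the production logic: the names `AllocationStatus` and `check_allocation` and the three state labels are hypothetical, and treating exactly 100% as "complete" is my assumption, since the brief only states that allocations are capped at 100%.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AllocationStatus:
    total: int      # sum of all objective weights, in percent
    remaining: int  # percentage points left under the cap (negative if exceeded)
    state: str      # "incomplete", "complete" or "over_cap" (hypothetical labels)


def check_allocation(weights: list[int], cap: int = 100) -> AllocationStatus:
    """Classify a set of objective weights against the percentage cap."""
    if any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative")
    total = sum(weights)
    if total > cap:
        state = "over_cap"
    elif total == cap:
        state = "complete"    # assumption: a full allocation is the target
    else:
        state = "incomplete"
    return AllocationStatus(total=total, remaining=cap - total, state=state)
```

In the interface, a result like this would map to the inline feedback next to the objectives list, for example showing the remaining percentage while the employee is still editing.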

Phase 2 — Objectives Definition (Manager)

Managers refine and validate objectives, ensuring alignment with governance constraints while maintaining traceable version states.

Phase 3 — Self-Evaluation (Employee)

The self-evaluation phase integrates weighted scoring with qualitative justification, feeding into the overall evaluation calculation.
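As an illustration of how weighted scoring can feed an overall result, the sketch below computes a weighted average from (weight, score) pairs. The function name `overall_score`, the requirement that weights total exactly 100, and the score scale used in the example are assumptions of mine; the actual calculation rules are not documented here.

```python
def overall_score(objectives: list[tuple[int, float]]) -> float:
    """Return the weighted average of objective scores.

    objectives: (weight_percent, score) pairs.
    Assumption: weights must total exactly 100 before a result is computed.
    """
    total_weight = sum(weight for weight, _ in objectives)
    if total_weight != 100:
        raise ValueError(f"weights total {total_weight}%, expected 100%")
    return sum(weight * score for weight, score in objectives) / 100


# Example with a hypothetical 1-5 score scale:
# 50% at 4.0, 30% at 3.0 and 20% at 5.0 give (200 + 90 + 100) / 100 = 3.9
example = overall_score([(50, 4.0), (30, 3.0), (20, 5.0)])
```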

Phase 4 — Manager Evaluation & Validation

The final evaluation phase enables structured review, score validation and HR override control within a role-based desktop environment.
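The four phases and their acting roles can be summarised as a small state model. This is a simplified sketch for illustration: the identifiers are hypothetical, deadlines and validation states are left out, and allowing HR to act in every phase is an assumption on my part, as the case study only mentions HR override control without specifying its reach.

```python
from enum import Enum
from typing import Optional


class Role(Enum):
    EMPLOYEE = "employee"
    MANAGER = "manager"
    HR = "hr"


class Phase(Enum):
    OBJECTIVES_PROPOSAL = 1    # Phase 1, employee
    OBJECTIVES_DEFINITION = 2  # Phase 2, manager
    SELF_EVALUATION = 3        # Phase 3, employee
    MANAGER_EVALUATION = 4     # Phase 4, manager

# Which role acts in each phase, as described in the phase list above.
PHASE_OWNER = {
    Phase.OBJECTIVES_PROPOSAL: Role.EMPLOYEE,
    Phase.OBJECTIVES_DEFINITION: Role.MANAGER,
    Phase.SELF_EVALUATION: Role.EMPLOYEE,
    Phase.MANAGER_EVALUATION: Role.MANAGER,
}


def can_edit(role: Role, phase: Phase) -> bool:
    """Return True if the role may act in the given phase."""
    # Assumption: HR override applies in every phase.
    return role is Role.HR or PHASE_OWNER[phase] is role


def next_phase(phase: Phase) -> Optional[Phase]:
    """Return the following phase, or None after the final phase."""
    return Phase(phase.value + 1) if phase.value < len(Phase) else None
```

Keeping the phase-to-role mapping in one place mirrors the design decision in the prototype: each role-based view is derived from the same process model instead of being designed as a separate flow.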

Mobile Version

Mobile views were intentionally simplified to support status awareness and essential interactions, while complex validation and high-density data workflows remained desktop-focused.

Conclusion & Key Takeaways

A key lesson was the value of investing heavily in user-flow architecture at the very start of the project.

Re-aligning the team around a shared process model reduced ambiguity and enabled a coherent, role-driven system design.

Mobile support was provided for visibility and essential actions, but the complexity of the evaluation logic required a structured desktop environment.

This project strengthened my approach to enterprise UX: structure first, interaction second, interface last. It also reinforced my belief that clarity in process architecture is the foundation of any successful enterprise system.